NVIDIA ConnectX-7 MCX755106AS-HEAT NDR 400Gb/s InfiniBand Smart Adapter

Product details:

| Brand name: | Mellanox |
|---|---|
| Model number: | MCX755106AS-HEAT (900-9x7AH-00788-DTZ) |
| Document: | Connectx-7 infiniband.pdf |

Payment & shipping terms:

| Minimum order quantity: | 1 piece |
|---|---|
| Price: | Negotiable |
| Packaging details: | Outer carton |
| Delivery time: | Depends on inventory |
| Payment terms: | T/T |
| Supply ability: | Supplied per project/batch |

Detailed information

| Model number: | MCX755106AS-HEAT (900-9x7AH-00788-DTZ) | Ports: | 2-port |
|---|---|---|---|
| Technology: | InfiniBand | Interface type: | OSFP56 |
| Dimensions: | 16.7 cm x 6.9 cm | Origin: | India / Israel / China |
| MOQ: | 200GBE | Host interface: | Gen3 X16 |
| Highlight: | NVIDIA ConnectX-7 InfiniBand adapter, 400Gb/s Mellanox network card, smart adapter with NDR support | | |

Product description

NVIDIA ConnectX-7 MCX755106AS-HEAT NDR 400Gb/s InfiniBand Smart Adapter

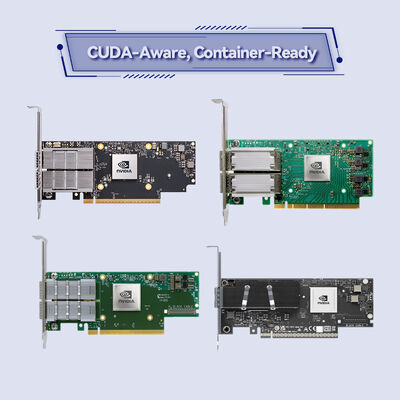

Flagship model: PCIe Gen5 x16, InfiniBand and RoCE, dual-port 400G. An ultra-low-latency 400Gb/s RDMA network adapter designed for AI factories, HPC clusters, and hyperscale cloud data centers. The ConnectX-7 MCX755106AS-HEAT integrates in-network computing, hardware security offload, and advanced virtualization to accelerate modern scientific computing and software-defined infrastructure.

Product overview

The NVIDIA ConnectX-7 family delivers up to 400Gb/s of bandwidth per port and supports both InfiniBand (NDR/HDR/EDR) and Ethernet (up to 400GbE). The MCX755106AS-HEAT offers a PCIe Gen5 host interface (up to 32 lanes), dual-port 400Gb/s density, Multi-Host capability, and dedicated engines for GPUDirect RDMA, NVMe-oF acceleration, and inline cryptography. Built for demanding AI training, simulation, and real-time analytics, the adapter reduces CPU overhead while maximizing data throughput and security.

With on-board memory for rendezvous offload, SHARP collective acceleration, and ASAP2 SDN offload, ConnectX-7 turns standard servers into high-performance network nodes with near-zero jitter and nanosecond-precision timing (IEEE 1588v2 Class C).

Key features

- NDR InfiniBand and 400GbE ready - up to 400Gb/s per port; the dual-port configuration provides 800Gb/s of aggregate bandwidth; supports NDR, HDR, and EDR InfiniBand and 400/200/100/50/25/10GbE.

- PCIe Gen5 x16 (up to 32 lanes) - high-throughput host interface with TLP processing hints, ATS, PASID, and SR-IOV.

- In-network computing - hardware offload of collective operations (SHARP), the rendezvous protocol, and burst buffers.

- GPUDirect RDMA and GPUDirect Storage - a direct GPU-to-NIC data path that accelerates deep learning and data analytics.

- Hardware security engines - inline IPsec/TLS/MACsec encryption and decryption (AES-GCM 128/256-bit), plus secure boot with a hardware root of trust.

- Advanced storage acceleration - NVMe-oF (over Fabrics/TCP), NVMe/TCP offload, T10-DIF signature handover, iSER, NFS over RDMA, SMB Direct.

- ASAP2 SDN and VirtIO acceleration - OVS offload, VXLAN/GENEVE/NVGRE encapsulation, connection tracking, and a programmable parser.

- Precision timing - PTP (IEEE 1588v2) with 12ns accuracy, SyncE, time-triggered scheduling, packet pacing.
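The relationship between the 800Gb/s aggregate port bandwidth and the "x16 (up to 32 lanes)" host interface can be checked with simple arithmetic. The sketch below uses the standard PCIe per-lane signalling rates and 128b/130b encoding; it gives raw link figures only and ignores packet (TLP) overhead, so real throughput is somewhat lower. The function names are illustrative, not part of any NVIDIA tool.

```python
# PCIe per-lane signalling rates in GT/s; Gen3 and later use 128b/130b encoding.
PCIE_GT_PER_LANE = {3: 8.0, 4: 16.0, 5: 32.0}
ENCODING_EFFICIENCY = 128 / 130


def pcie_gbps(gen: int, lanes: int) -> float:
    """Raw one-direction PCIe link bandwidth in Gb/s, before TLP overhead."""
    return PCIE_GT_PER_LANE[gen] * ENCODING_EFFICIENCY * lanes


def port_aggregate_gbps(ports: int, gbps_per_port: float) -> float:
    """Total network-side bandwidth across all ports, in Gb/s."""
    return ports * gbps_per_port


if __name__ == "__main__":
    need = port_aggregate_gbps(2, 400)  # dual-port, 400Gb/s per port
    for gen, lanes in [(5, 16), (5, 32), (4, 16)]:
        have = pcie_gbps(gen, lanes)
        verdict = "covers" if have >= need else "is below"
        print(f"Gen{gen} x{lanes}: {have:.0f} Gb/s {verdict} {need:.0f} Gb/s")
```

Under these assumptions a Gen5 x16 link carries roughly 504 Gb/s, so sustaining both ports at line rate simultaneously would need the 32-lane option, and a Gen4 x16 slot roughly halves the x16 figure again.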

Technology: Inside ConnectX-7

Built on a 7nm process, ConnectX-7 integrates multiple hardware acceleration engines that offload the CPU and deliver deterministic performance. Key technology pillars include:

- RDMA over Converged Ethernet (RoCE) - Zero-Touch RoCE for low-latency Ethernet fabrics.

- Dynamically Connected Transport (DCT) and XRC - efficient MPI and HPC communication.

- On-Demand Paging (ODP) and User-mode Memory Registration (UMR) - simplify memory management for large-scale applications.

- Scalable Hierarchical Aggregation and Reduction Protocol (SHARP) - in-network data reduction for MPI collectives.

- Multi-Host technology - lets up to 4 independent hosts share one adapter, improving server utilization.

- PLDM and SPDM manageability - firmware updates, monitoring, and device attestation for enterprise security.

Typical deployments

- AI and machine learning clusters - large-scale training with NCCL, UCX, and GPUDirect RDMA.

- HPC simulation and research - weather modeling, genomics, and molecular dynamics that require low-latency MPI.

- Hyperscale cloud and SDDC - overlay networking, NFV acceleration, secure multi-tenancy (SR-IOV).

- Enterprise storage systems - NVMe-oF target offload and distributed file systems (Lustre, GPUDirect Storage).

- 5G edge and telecom - time-sensitive infrastructure with Class C PTP and MACsec security.

Compatibility

Operating systems and virtualization: in-box drivers for Linux (RHEL, Ubuntu, Rocky Linux), Windows Server, VMware ESXi (SR-IOV), and Kubernetes (CNI plugins). Optimized for NVIDIA HPC-X, UCX, OpenMPI, MVAPICH, MPICH, OpenSHMEM, NCCL, and UCC.

Hardware compatibility: standard PCIe Gen5 slots (x16 mechanical, x16/x32 electrical). Certified with leading server platforms from HPE and Supermicro and with NVIDIA DGX systems.

Interoperability: fully compliant with InfiniBand Trade Association specification 1.5, IEEE 802.3 for Ethernet, and the PCI-SIG Gen5 specifications.

विनिर्देश - ConnectX-7 MCX755106AS-HEAT

| पैरामीटर | विवरण |

|---|---|

| उत्पाद मॉडल | MCX755106AS-HEAT |

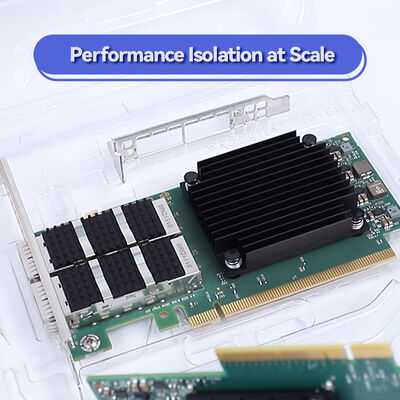

| फॉर्म फैक्टर | PCIe HHHL (हाफ हाइट हाफ लेंथ), FHHL ब्रैकेट वैकल्पिक |

| होस्ट इंटरफ़ेस | PCIe Gen5.0 x16 (32 लेन तक, बायफर्केशन और मल्टी-होस्ट का समर्थन) |

| नेटवर्क प्रोटोकॉल | InfiniBand (NDR/HDR/EDR) और ईथरनेट (400GbE, 200GbE, 100GbE, 50GbE, 25GbE, 10GbE) |

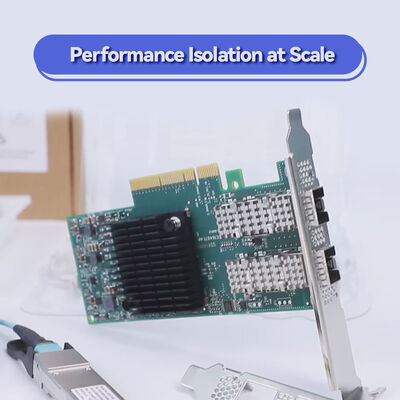

| पोर्ट कॉन्फ़िगरेशन | डुअल-पोर्ट QSFP-DD (2x 400Gb/s NDR, 800Gb/s एग्रीगेट) |

| InfiniBand स्पीड | NDR 400Gb/s प्रति पोर्ट, HDR 200Gb/s, EDR 100Gb/s, FDR (संगत) |

| ईथरनेट स्पीड | 400/200/100/50/25/10GbE NRZ/PAM4 |

| ऑन-बोर्ड मेमोरी | रेंडेज़वस ऑफ़लोड और बर्स्ट बफर के लिए एकीकृत इन-नेटवर्क मेमोरी |

| सुरक्षा ऑफ़लोड | इनलाइन IPsec, TLS, MACsec (AES-GCM 128/256-बिट), सिक्योर बूट, फ्लैश एन्क्रिप्शन |

| स्टोरेज ऑफ़लोड | NVMe-oF (TCP/फैब्रिक्स), NVMe/TCP, T10-DIF, SRP, iSER, RDMA पर NFS, SMB डायरेक्ट |

| टाइमिंग और सिंक | IEEE 1588v2 PTP (12ns सटीकता), SyncE, प्रोग्रामेबल PPS, टाइम-ट्रिगर शेड्यूलिंग |

| वर्चुअलाइजेशन | SR-IOV, VirtIO त्वरण, VXLAN/NVGRE/GENEVE ऑफ़लोड, कनेक्शन ट्रैकिंग (L4 फ़ायरवॉल) |

| प्रबंधनीयता | NC-SI, SMBus/PCIe पर MCTP, PLDM (मॉनिटर/फर्मवेयर/FRU/Redfish), SPDM, SPI फ्लैश, JTAG |

| रिमोट बूट | InfiniBand बूट, iSCSI, UEFI, PXE |

| बिजली की खपत | सार्वजनिक रूप से निर्दिष्ट नहीं - डुअल-पोर्ट हाई-परफॉरमेंस एडॉप्टर के लिए पर्याप्त एयरफ्लो की आवश्यकता होती है; कृपया ऑर्डर करने से पहले पुष्टि करें |

| ऑपरेटिंग तापमान | 0°C से 55°C (उचित चेसिस कूलिंग के साथ) |

Note: some parameters may vary with firmware and system configuration. Consult the NVIDIA documentation or contact Starsurge to verify a specific value.

Benefits - built for the modern data center

Lowest total cost of ownership: offloads networking, storage, and security tasks from the CPU, reducing power and cooling cost per Gb/s.

Future-ready bandwidth: PCIe Gen5 and NDR 400G per port remove bottlenecks for next-generation GPU servers and AI clusters.

Enterprise security: hardware inline encryption (IPsec/TLS/MACsec) and a secure chain of trust support compliance requirements (FIPS, DoD).

Seamless integration: full compatibility with major Linux distributions, hypervisors, and container orchestration platforms.

Frequently asked questions (FAQ)

Q: Is the MCX755106AS-HEAT compatible with both InfiniBand and Ethernet switches?

Yes. ConnectX-7 supports dual-protocol operation; you can run it in InfiniBand mode (NDR fabric) or Ethernet mode (RoCE). The adapter can auto-detect the link type, or the type can be set through firmware configuration.
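On a Linux host, the mode and speed a port is actually running at can be read back from sysfs. The sketch below assumes the standard `/sys/class/infiniband/<device>/ports/<n>/` layout with its `link_layer` and `rate` files, where `rate` holds strings such as `100 Gb/sec (4X EDR)`; the helper names are illustrative, and device names will differ per system.

```python
import re
from pathlib import Path

SYSFS_IB = Path("/sys/class/infiniband")


def parse_rate(text: str) -> float:
    """Extract the Gb/s figure from a sysfs rate string like '100 Gb/sec (4X EDR)'."""
    m = re.match(r"\s*([\d.]+)\s*Gb/sec", text)
    if not m:
        raise ValueError(f"unrecognised rate string: {text!r}")
    return float(m.group(1))


def list_ports(root: Path = SYSFS_IB):
    """Yield (device, port, link_layer, rate_gbps) for each RDMA port found."""
    if not root.is_dir():
        return  # no RDMA devices (or not a Linux host)
    for dev in sorted(root.iterdir()):
        ports_dir = dev / "ports"
        if not ports_dir.is_dir():
            continue
        for port in sorted(ports_dir.iterdir()):
            link_layer = (port / "link_layer").read_text().strip()
            rate = parse_rate((port / "rate").read_text())
            yield dev.name, port.name, link_layer, rate


if __name__ == "__main__":
    for dev, port, layer, rate in list_ports():
        print(f"{dev} port {port}: {layer}, {rate:g} Gb/s")
```

A port running in Ethernet (RoCE) mode reports `Ethernet` as its link layer, and an InfiniBand port reports `InfiniBand`.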

Q: Does this dual-port model support GPUDirect Storage on both ports at the same time?

Yes. GPUDirect Storage and GPUDirect RDMA are fully supported on both ports, enabling direct data movement between storage and GPU memory without CPU bounce buffers.

Q: Which PCIe bifurcation options are available for the MCX755106AS-HEAT?

It supports standard bifurcation (x16, x8/x8) and NVIDIA Multi-Host, which enables up to 4 independent hosts on suitable PCIe switch platforms.

Q: Can I use this adapter for NVMe/TCP offload?

Yes. Hardware offload for NVMe over TCP reduces CPU utilization and improves latency for software-defined storage.

Q: Where can I find the latest firmware and drivers?

The mlx5 driver is included in the mainline Linux kernel, and NVIDIA also distributes the MLNX_OFED driver package. NVIDIA's firmware tools (mlxconfig, flint) handle firmware configuration and updates. Starsurge also provides curated firmware packages.
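As an illustration of the firmware configuration step, the sketch below only builds the `mlxconfig` command line for switching a port between InfiniBand and Ethernet; it does not run it. It assumes the `LINK_TYPE_P<n>` parameter with values 1 (InfiniBand) and 2 (Ethernet) as documented for NVIDIA's firmware tools, and the device path is a hypothetical example. Check the output of `mlxconfig -d <device> query` on your own system before applying anything; a changed setting typically takes effect only after a firmware reset or reboot.

```python
import shlex

# LINK_TYPE values as documented for mlxconfig: 1 = InfiniBand, 2 = Ethernet.
LINK_TYPE = {"infiniband": 1, "ethernet": 2}


def mlxconfig_set_link_type(device: str, port: int, mode: str) -> list[str]:
    """Build (but do not run) the mlxconfig command that sets a port's link type."""
    if port not in (1, 2):
        raise ValueError("this dual-port adapter has ports 1 and 2")
    if mode not in LINK_TYPE:
        raise ValueError(f"mode must be one of {sorted(LINK_TYPE)}")
    return ["mlxconfig", "-d", device, "set", f"LINK_TYPE_P{port}={LINK_TYPE[mode]}"]


if __name__ == "__main__":
    # Hypothetical MST device path; list real ones with `mst status`.
    cmd = mlxconfig_set_link_type("/dev/mst/mt4129_pciconf0", 1, "ethernet")
    print(shlex.join(cmd))
```

Keeping the command as an argument list makes it safe to pass to `subprocess.run` later without shell quoting issues.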

Precautions and ordering notes

- Make sure the server motherboard provides a PCIe Gen5 slot with adequate cooling (active airflow is recommended for 400G operation).

- For Multi-Host configurations, verify platform support and cabling requirements (splitter cables may be required).

- Optical modules and DAC cables are sold separately; use NVIDIA-certified transceivers for compliance.

- NVIDIA has not published a maximum power consumption figure; typical thermal designs assume a 30W-40W range under heavy load for dual-port models. Validate against your chassis thermal guide.

- Cryptographic features (IPsec/TLS offload) may require additional licensing; please confirm with sales.

Compatibility matrix (simplified)

| Component / ecosystem | Supported | Notes |
|---|---|---|
| NVIDIA DGX H100 / GH200 | Yes | Certified with the dual-port configuration |
| VMware vSphere / ESXi | Yes (SR-IOV) | Driver support included |
| Linux kernel 5.x+ | Yes (in-box) | MLNX_OFED recommended |
| Windows Server 2022 | Yes | Native RDMA / RoCE |
| Kubernetes / CNI | Yes | Multus, SR-IOV CNI |
| OpenMPI / MVAPICH | Yes | Optimized for InfiniBand verbs |

Buyer checklist - ConnectX-7 adoption

- Check the host platform: a PCIe Gen5 slot (a Gen4 slot works through backward compatibility, but with limited bandwidth).

- Confirm the cable type: 400G NDR (OSFP/QSFP-DD), or a splitter for 2x200G per port.

- Verify cooling: dual-port high-power adapters require at least 350 LFM of airflow.

- Security features: confirm whether secure boot and crypto offload are required (the HEAT model includes a hardware root of trust and full inline engines).

- Software stack: check that the MLNX_OFED or in-box driver version is compatible with your kernel/OS.
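The checklist above can be captured as a small pre-order review script. This is a minimal sketch: the `HostPlan` fields and the accepted cable labels are hypothetical names chosen for illustration, and the thresholds are simply the figures quoted in the checklist (PCIe Gen5, 350 LFM).

```python
from dataclasses import dataclass

MIN_AIRFLOW_LFM = 350   # airflow figure quoted in the checklist
TARGET_PCIE_GEN = 5     # slot generation the checklist asks for
ACCEPTED_CABLES = {"400G NDR", "2x200G splitter"}  # hypothetical labels


@dataclass
class HostPlan:
    """Hypothetical description of a candidate server (not an NVIDIA data model)."""
    pcie_gen: int
    airflow_lfm: int
    cable: str
    driver_verified: bool  # MLNX_OFED / in-box driver checked against the OS


def review(plan: HostPlan) -> list[str]:
    """Return warnings; an empty list means no checklist item failed."""
    warnings = []
    if plan.pcie_gen < TARGET_PCIE_GEN:
        warnings.append(f"PCIe Gen{plan.pcie_gen} slot: host bandwidth will be limited")
    if plan.airflow_lfm < MIN_AIRFLOW_LFM:
        warnings.append(f"airflow {plan.airflow_lfm} LFM is below {MIN_AIRFLOW_LFM} LFM")
    if plan.cable not in ACCEPTED_CABLES:
        warnings.append(f"cable type {plan.cable!r} not confirmed")
    if not plan.driver_verified:
        warnings.append("driver version not verified against kernel/OS")
    return warnings


if __name__ == "__main__":
    for line in review(HostPlan(pcie_gen=4, airflow_lfm=300,
                                cable="400G NDR", driver_verified=True)):
        print("WARNING:", line)
```

The security item is left out of the script on purpose, since whether secure boot and crypto offload are required is a procurement decision rather than something a threshold can check.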
