NVIDIA Quantum MQM8700-HS2F 200G InfiniBand Switch, 40 Ports, 16Tb/s

Product Details:

| Brand Name: | Mellanox |
|---|---|
| Model Number: | MQM8700-HS2F (920-9B110-00FH-0MD) |
| Documentation: | MQM8700 series.pdf |

Payment & Shipping Terms:

| Minimum Order Quantity: | 1 piece |
|---|---|
| Price: | Negotiable |
| Packaging Details: | Outer carton |
| Delivery Time: | Depends on inventory |
| Payment Terms: | T/T |
| Supply Ability: | Supplied per project/batch |

Detailed Information:

| Model: | MQM8700-HS2F (920-9B110-00FH-0MD) | Condition: | New and original |
|---|---|---|---|
| Uplink Connectivity: | 200 Gbps | Keyword: | Mellanox network switch |
| Throughput: | 16 Tb/s | Compliance: | RoHS-6, CE, FCC, ISO, ETS |
| Highlight: | NVIDIA InfiniBand switch 200G, Mellanox network switch 40-port, Quantum MQM8700 switch 16Tb/s | | |

Product Description

A high-performance, fixed-configuration switch that delivers 40 ports of 200Gb/s (or 80 ports of 100Gb/s), 16 Tb/s of non-blocking throughput, in-network computing acceleration, and ultra-low latency, purpose-built for HPC, AI clusters, and hyperscale data centers.

The NVIDIA Quantum QM8700 series (including the MQM8700-HS2F) is a new class of 200G InfiniBand smart switches designed to remove bottlenecks in AI, high-performance computing, and cloud storage environments. With up to forty 200Gb/s ports in a compact 1U form factor, the switch delivers 16 Tb/s of aggregate throughput with cut-through latency below 130 ns. Built on NVIDIA's scalable InfiniBand architecture, the MQM8700-HS2F includes an embedded dual-core x86 processor, an integrated subnet manager (for up to 2,000 nodes), and support for NVIDIA SHARP™ technology, which accelerates collective operations by offloading communication from the servers to the network fabric.

Designed for maximum flexibility, each 200Gb/s QSFP56 port can be split into two independent 100Gb/s ports, doubling the radix for dense top-of-rack deployments. The MQM8700-HS2F variant (P2C airflow) is a fully managed switch running MLNX-OS®, suited to enterprises that want high-performance networking with simple out-of-the-box management through the CLI, WebUI, SNMP, and a JSON API.
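The headline numbers are related by simple arithmetic: forty 200Gb/s ports carry 8 Tb/s in each direction, and the 16 Tb/s aggregate counts both directions of the full-duplex links. Splitting every port into two 100Gb/s ports doubles the radix without changing the aggregate. A minimal Python sketch of the calculation, assuming the quoted figure is bidirectional (the helper name is illustrative):

```python
def aggregate_throughput_tbps(ports: int, gbps_per_port: int) -> float:
    """Aggregate switch throughput in Tb/s, counting both directions.

    Every full-duplex port carries its line rate in each direction at once,
    so each port contributes twice its rate to the total.
    """
    return ports * gbps_per_port * 2 / 1000


# Native mode: 40 QSFP56 ports at 200 Gb/s.
native = aggregate_throughput_tbps(40, 200)
# Split mode: each port becomes two 100 Gb/s ports, giving 80 ports.
split = aggregate_throughput_tbps(40 * 2, 100)

print(f"native: {native} Tb/s, split: {split} Tb/s")  # native: 16.0 Tb/s, split: 16.0 Tb/s
```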

Key features:

- 200Gb/s InfiniBand per port: forty QSFP56 ports supporting 200G or 100G split mode in a non-blocking architecture.

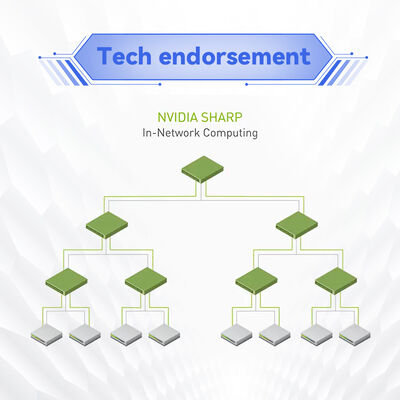

- In-network computing acceleration: NVIDIA SHARP™ technology enables in-switch data aggregation, cutting MPI, NCCL, and SHMEM collective communication time several-fold.

- High radix and split capability: turn 40x 200G ports into 80x 100G ports for double-density topologies without additional switches.

- Advanced congestion management: adaptive routing, static routing, and quality of service (QoS) to mitigate hot spots and maximize effective fabric bandwidth.

- Integrated subnet manager: the on-board SM supports up to 2,000 nodes for fast cluster bring-up and is fully managed through MLNX-OS.

- Redundant, hot-swappable PSUs: 1+1 redundant power, 80 PLUS Gold certified and ENERGY STAR compliant, with power optimization when only some ports are in use.

- Comprehensive management: CLI, WebUI, SNMP, and JSON, plus optional NVIDIA UFM™ for advanced external fabric orchestration (the MQM8790 variants are externally managed).

- Backward compatible: seamless interoperability with previous InfiniBand generations (EDR, FDR).

Unlike traditional switches that only forward packets, NVIDIA Quantum switches embed the Scalable Hierarchical Aggregation and Reduction Protocol (SHARP) engine directly in silicon. Data passing through the switch can be aggregated, reduced, or broadcast inside the network, without multiple round trips to the server endpoints. This dramatically accelerates collective operations such as all-reduce, barrier, and broadcast, which are critical to deep learning frameworks (TensorFlow, PyTorch via NCCL) and MPI-based HPC simulations. The result is up to a 10x performance gain for communication-intensive workloads and lower CPU overhead, freeing compute resources for actual application processing.
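To illustrate why in-network reduction saves round trips, the sketch below models hierarchical aggregation on a two-level tree: leaf switches sum the vectors from their attached hosts, the root sums the leaf results, and the final vector is broadcast back, so each host sends its data once and receives the result once. This is a simplified Python model of the idea, not NVIDIA's SHARP implementation or API; the function names and the two-level layout are hypothetical.

```python
from typing import Dict, List

Vector = List[float]


def elementwise_sum(vectors: List[Vector]) -> Vector:
    """Reduce equal-length vectors by summing them element by element."""
    return [sum(values) for values in zip(*vectors)]


def tree_allreduce(hosts_by_leaf: Dict[str, Dict[str, Vector]]) -> Dict[str, Vector]:
    """Toy hierarchical all-reduce on a two-level switch tree.

    1. Each leaf switch reduces the vectors of its directly attached hosts.
    2. The root switch reduces the partial results from every leaf.
    3. The result is broadcast back down so every host receives a copy.
    """
    # Step 1: partial reductions at the leaf switches.
    leaf_partials = [elementwise_sum(list(hosts.values())) for hosts in hosts_by_leaf.values()]
    # Step 2: final reduction at the root.
    result = elementwise_sum(leaf_partials)
    # Step 3: broadcast the same result to every host.
    return {host: list(result) for hosts in hosts_by_leaf.values() for host in hosts}


if __name__ == "__main__":
    fabric = {
        "leaf-1": {"gpu-0": [1.0, 2.0], "gpu-1": [3.0, 4.0]},
        "leaf-2": {"gpu-2": [5.0, 6.0], "gpu-3": [7.0, 8.0]},
    }
    for host, value in tree_allreduce(fabric).items():
        print(host, value)  # every host prints [16.0, 20.0]
```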

The MQM8700-HS2F also supports adaptive routing and congestion control algorithms that automatically balance traffic across multiple paths, sustaining near line-rate throughput even under heavy contention.
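The difference between static and adaptive routing can be shown with a toy model: static routing maps a destination to a fixed uplink, while adaptive routing picks the least-loaded eligible uplink at forwarding time. The sketch below is hypothetical and greatly simplified (the switch's actual algorithm runs in hardware and is not reproduced here); it only shows how a hot spot that lands on one uplink under static routing gets spread across all of them.

```python
import zlib
from typing import Dict, List


def static_uplink(destination: str, uplinks: List[str]) -> str:
    """Static routing: a given destination always maps to the same uplink.

    crc32 keeps the mapping deterministic across runs, unlike Python's salted hash().
    """
    return uplinks[zlib.crc32(destination.encode()) % len(uplinks)]


def adaptive_uplink(load: Dict[str, int], uplinks: List[str]) -> str:
    """Adaptive routing (simplified): choose the currently least-loaded uplink."""
    return min(uplinks, key=lambda port: load[port])


def route(flows: List[str], uplinks: List[str], adaptive: bool) -> Dict[str, int]:
    """Assign each flow (named by its destination) to an uplink; return per-uplink load."""
    load = {port: 0 for port in uplinks}
    for destination in flows:
        if adaptive:
            port = adaptive_uplink(load, uplinks)
        else:
            port = static_uplink(destination, uplinks)
        load[port] += 1
    return load


if __name__ == "__main__":
    uplinks = ["up-0", "up-1", "up-2", "up-3"]
    hot_spot = ["node-42"] * 8  # eight flows to the same destination
    print("static:  ", route(hot_spot, uplinks, adaptive=False))  # all 8 on one uplink
    print("adaptive:", route(hot_spot, uplinks, adaptive=True))   # 2 on each uplink
```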

Typical applications:

- AI and machine learning clusters: large GPU-based systems (NVIDIA DGX, HGX) that need a 200G interconnect with SHARP for NCCL acceleration.

- High-performance computing (HPC): research labs, national laboratories, and universities running MPI workloads, weather simulations, and computational fluid dynamics.

- Hyperscale and cloud data centers: fat-tree, Dragonfly+, and multi-dimensional torus topologies for scalable, high-bisection-bandwidth fabrics.

- Enterprise and financial services: ultra-low-latency trading platforms and database acceleration that require predictable network performance.

- Top-of-rack (ToR) and end-of-row: twice as many 100Gb/s server connections per switch using the port-split capability.
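The fat-tree option can be made concrete with the standard sizing formulas for a non-blocking fat tree built from identical k-port switches: a two-level leaf/spine tree supports k²/2 hosts, and a three-level k-ary fat tree supports k³/4. The sketch below applies them to a radix of 40 (200G native) and 80 (100G split). It assumes full bisection bandwidth and one link per host, and ignores cable reach; the function names are hypothetical.

```python
from typing import Dict


def two_level_fat_tree(radix: int) -> Dict[str, int]:
    """Non-blocking leaf/spine tree built from identical `radix`-port switches.

    Each leaf gives half its ports to hosts and half to uplinks (one per spine).
    Each spine has `radix` ports, one per leaf, so there are `radix` leaves.
    """
    half = radix // 2
    leaves, spines = radix, half
    return {"hosts": leaves * half, "switches": leaves + spines}


def three_level_fat_tree(radix: int) -> Dict[str, int]:
    """Classic k-ary fat tree: k pods of k/2 edge + k/2 aggregation switches,
    (k/2)^2 core switches, and k^3/4 hosts."""
    half = radix // 2
    pod_switches = radix * radix   # k pods x k switches per pod
    core_switches = half * half
    hosts = radix * half * half    # k pods x k/2 edge switches x k/2 hosts each
    return {"hosts": hosts, "switches": pod_switches + core_switches}


if __name__ == "__main__":
    for radix, mode in [(40, "200G native"), (80, "100G split")]:
        print(mode, two_level_fat_tree(radix), three_level_fat_tree(radix))
```

For the 40-port, 200G case this works out to 800 hosts on 60 switches with two levels, or 16,000 hosts on 2,000 switches with three; a real design also has to respect cable reach and the subnet manager's node limit.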

The QM8700 series works seamlessly with NVIDIA ConnectX-6, ConnectX-7, and BlueField DPU adapters, supporting both InfiniBand and mixed fabrics. It is backward compatible with earlier InfiniBand speeds (EDR 100Gb/s, FDR 56Gb/s), fully interoperable with existing NVIDIA Quantum fabric switches, and can be managed through NVIDIA Unified Fabric Manager (UFM) for telemetry and predictive monitoring. Host-side support covers major Linux distributions (RHEL, Ubuntu, Rocky Linux) and NVIDIA-Certified GPU servers.
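Backward compatibility means an HDR switch port and an older adapter settle on the fastest mode both ends support. A simplified Python model of that selection, limited to the modes named on this page; it ignores link width, cable capability, and the split HDR100 mode, and the function name and mode sets are hypothetical:

```python
from typing import Optional, Set, Tuple

# InfiniBand modes named on this page, fastest first, with nominal data rates in Gb/s.
MODES_FASTEST_FIRST = [("HDR", 200), ("EDR", 100), ("FDR", 56)]


def negotiated_mode(switch_modes: Set[str], adapter_modes: Set[str]) -> Optional[Tuple[str, int]]:
    """Return the fastest (mode, Gb/s) pair both link partners support, or None."""
    for mode, rate_gbps in MODES_FASTEST_FIRST:
        if mode in switch_modes and mode in adapter_modes:
            return mode, rate_gbps
    return None


if __name__ == "__main__":
    quantum_port = {"HDR", "EDR", "FDR"}
    print(negotiated_mode(quantum_port, {"HDR", "EDR", "FDR"}))  # ('HDR', 200)
    print(negotiated_mode(quantum_port, {"EDR", "FDR"}))         # ('EDR', 100)
    print(negotiated_mode(quantum_port, {"FDR"}))                # ('FDR', 56)
```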

| Parameter | Details |
|---|---|
| Model Number | MQM8700-HS2F |
| Ports and Speed | 40 QSFP56 ports; up to 200Gb/s per port; can be split into 80 ports of 100Gb/s |
| Aggregate Throughput | 16 Tb/s, non-blocking |
| Switching Latency | < 130 ns (cut-through) |
| Management | Fully managed; on-board dual-core x86 CPU (Broadwell ComEx D-1508, 2.2 GHz), 8 GB system memory; MLNX-OS, CLI, WebUI, SNMP, JSON; integrated subnet manager |
| Power Supply | 1+1 redundant, hot-swappable; 100-127 VAC / 200-240 VAC; 80 PLUS Gold; ENERGY STAR |
| Airflow | P2C (power-to-connector) for MQM8700-HS2F; standard depth |
| Dimensions (H x W x D) | 1.7 x 17 x 23.2 in (43.6 x 433.2 x 590.6 mm), 1U |
| Weight | With 2 PSUs: 12.48 kg / 27.5 lb |
| Operating Temperature | 0°C to 40°C |
| Certifications | CE, FCC, VCCI, ICES, RCMS, RoHS compliant |
| Warranty | 1-year limited hardware warranty (extended options available) |

| Orderable Part Number (OPN) | Description | Airflow | Management |
|---|---|---|---|
| MQM8700-HS2F | NVIDIA Quantum 200Gb/s InfiniBand switch, 40 QSFP56 ports, dual AC PSUs, dual-core x86, standard depth, P2C airflow, rail kit | P2C (power-to-connector) | Managed (MLNX-OS) |
| MQM8700-HS2R | Same as above but with C2P (connector-to-power) airflow | C2P | Managed |
| MQM8790-HS2F | Unmanaged (externally managed) variant, P2C airflow, suited to external management with UFM | P2C | Externally managed (UFM-ready) |
| MQM8790-HS2R | Unmanaged (externally managed), C2P airflow | C2P | Externally managed |

Note: For high-density 100G deployments using split cables, the managed and unmanaged SKUs offer the same port flexibility. Choose the MQM8700-HS2F for integrated subnet management and full OS access.
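Each OPN in the ordering table encodes two independent choices: the model number selects managed (MQM8700) or externally managed (MQM8790), and the suffix selects the airflow (HS2F for P2C, HS2R for C2P). A small hypothetical helper that decodes the four listed OPNs can be handy when checking a bill of materials; it deliberately rejects anything outside the table rather than guessing.

```python
from typing import NamedTuple


class OpnInfo(NamedTuple):
    management: str
    airflow: str


# Mappings taken from the ordering table on this page.
MANAGEMENT_BY_MODEL = {
    "MQM8700": "managed (MLNX-OS)",
    "MQM8790": "externally managed (UFM-ready)",
}
AIRFLOW_BY_SUFFIX = {"HS2F": "P2C", "HS2R": "C2P"}


def decode_opn(opn: str) -> OpnInfo:
    """Decode an OPN such as 'MQM8700-HS2F'; reject anything not in the table."""
    model, _, suffix = opn.strip().upper().partition("-")
    if model not in MANAGEMENT_BY_MODEL or suffix not in AIRFLOW_BY_SUFFIX:
        raise ValueError(f"OPN not in the ordering table: {opn!r}")
    return OpnInfo(MANAGEMENT_BY_MODEL[model], AIRFLOW_BY_SUFFIX[suffix])


if __name__ == "__main__":
    print(decode_opn("MQM8700-HS2F"))
    # OpnInfo(management='managed (MLNX-OS)', airflow='P2C')
```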

Benefits:

- Better ROI: lower capital expenditure through double-density 100G port capacity and fewer switches in large fabrics.

- Energy efficient: dynamic power scaling based on port utilization lowers operating costs.

- SHARP™ acceleration: up to 10x faster collective communication without consuming host CPU cycles.

- Simplified management: the on-board subnet manager removes the need for an external SM server in clusters of up to 2,000 nodes.

- Scalable topologies: native support for fat tree, Dragonfly+, and torus to future-proof data center growth.

- Proven ecosystem: backed by the NVIDIA software stack and 24/7 partner support.

Starsurge Group provides end-to-end lifecycle services for NVIDIA Quantum switches, including pre-sales architecture consulting, proof-of-concept testing, and global logistics. Our experienced technical team provides remote troubleshooting, firmware upgrades, and RMA coordination. Extended warranty options and 24x7 priority support are available on request. Multilingual support for the EMEA, Americas, and APAC regions ensures fast response for mission-critical deployments.

Installation notes:

- Keep the ambient operating temperature between 0°C and 40°C and maintain proper rack ventilation.

- Use only qualified QSFP56 optics or DAC cables listed in the NVIDIA compatibility guide.

- The airflow direction (P2C or C2P) must match the data center cooling scheme. The MQM8700-HS2F uses P2C (power-to-connector) airflow.

- Connect the power supplies to a properly grounded AC source within the supported input ranges (100-127 VAC or 200-240 VAC).

- Firmware updates: always back up the configuration before upgrading through MLNX-OS.

- The switch weighs about 12.5 kg with two PSUs; use an appropriate mechanical lift when rack-mounting.
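The environmental notes above can be turned into a quick pre-installation check. This is a hypothetical sketch: the `RackSite` fields and the function name are illustrative, the limits are copied from the specification table, and it compares a nominal supply voltage only (no tolerance or inrush considerations).

```python
from dataclasses import dataclass
from typing import List, Tuple

# Limits copied from the specification table on this page.
OPERATING_TEMP_C: Tuple[float, float] = (0.0, 40.0)
AC_INPUT_RANGES_V: List[Tuple[float, float]] = [(100.0, 127.0), (200.0, 240.0)]


@dataclass
class RackSite:
    """Hypothetical description of where the switch will be installed."""
    ambient_temp_c: float
    nominal_ac_v: float
    rack_airflow: str  # airflow direction the rack's cooling expects: "P2C" or "C2P"


def site_problems(site: RackSite, switch_airflow: str = "P2C") -> List[str]:
    """Return a list of problems; an empty list means every check passed.

    The default airflow is P2C, matching the MQM8700-HS2F.
    """
    problems: List[str] = []
    low, high = OPERATING_TEMP_C
    if not low <= site.ambient_temp_c <= high:
        problems.append(f"ambient {site.ambient_temp_c} °C is outside {low}-{high} °C")
    if not any(lo <= site.nominal_ac_v <= hi for lo, hi in AC_INPUT_RANGES_V):
        problems.append(f"AC input {site.nominal_ac_v} V is outside the supported ranges")
    if site.rack_airflow != switch_airflow:
        problems.append(f"rack expects {site.rack_airflow}, switch uses {switch_airflow}")
    return problems


if __name__ == "__main__":
    print(site_problems(RackSite(ambient_temp_c=24.0, nominal_ac_v=230.0, rack_airflow="P2C")))  # []
    print(site_problems(RackSite(ambient_temp_c=45.0, nominal_ac_v=230.0, rack_airflow="C2P")))
```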

Hong Kong Starsurge Group Co., Limited is a technology-driven provider of network hardware, IT services, and system integration solutions. Founded in 2008, the company serves customers worldwide with products including network switches, NICs, wireless access points, controllers, cabling, and infrastructure equipment. Backed by an experienced sales and technical team, Starsurge supports industries such as government, healthcare, manufacturing, education, finance, and enterprise.

With a customer-first approach, Starsurge focuses on reliable quality, responsive service, and tailored solutions. As an authorized partner for leading networking brands, we provide global logistics, custom software development, and multilingual support, helping customers build efficient, scalable, and dependable network infrastructure.

| Component | Supported Models / Types |
|---|---|
| Adapters | NVIDIA ConnectX-6, ConnectX-7, BlueField-2 / BlueField-3 (InfiniBand) |
| Cables and Optics | QSFP56 DAC (passive up to 3 m, active up to 5 m), AOC, optical transceivers (SR4, LR4) |
| Operating Systems | Linux (RHEL 8/9, Ubuntu 20.04/22.04, Rocky Linux), Windows Server with an InfiniBand stack |
| Management Platforms | MLNX-OS, NVIDIA UFM, Prometheus/Grafana via an SNMP exporter |
| Topology Support | Fat tree, Dragonfly+, 2D/3D torus, SlimFly |

Pre-deployment checklist:

- Confirm that the airflow direction (P2C for the MQM8700-HS2F) matches your rack cooling scheme.

- Verify the required port speed (200G native or 100G breakout) and the cable assembly type.

- Check the power input: dual redundant AC feeds with C13/C14 connectors.

- Make sure the rack depth accommodates the 23.2-inch (standard-depth) chassis.

- Plan the subnet manager: the on-board SM covers up to 2,000 nodes; larger clusters need an external, server-hosted SM (for example, NVIDIA UFM).

- Validate software licensing requirements for advanced features (optional UFM).
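The port-speed and subnet-manager items on this checklist reduce to two quick counts for a single switch: a 200G host takes a whole QSFP56 port, two 100G hosts share one split port, and the fabric size is compared with the on-board SM limit. A hypothetical Python sketch; it assumes every port can be split independently, reserves no ports for uplinks, and treats "fabric nodes" as a single planning number:

```python
import math
from typing import List

SWITCH_PORTS = 40              # QSFP56 ports on one QM8700 switch
ONBOARD_SM_NODE_LIMIT = 2000   # on-board subnet manager limit quoted on this page


def ports_needed(hosts_200g: int, hosts_100g: int) -> int:
    """QSFP56 ports consumed on one switch.

    A 200G host uses a whole port; two 100G hosts share one split port.
    Assumes (hypothetically) that every port can be split independently.
    """
    return hosts_200g + math.ceil(hosts_100g / 2)


def plan_notes(hosts_200g: int, hosts_100g: int, fabric_nodes: int) -> List[str]:
    """Return planning warnings for a single-switch deployment; empty means OK."""
    notes: List[str] = []
    used = ports_needed(hosts_200g, hosts_100g)
    if used > SWITCH_PORTS:
        notes.append(f"needs {used} ports, but one switch has {SWITCH_PORTS}")
    if fabric_nodes > ONBOARD_SM_NODE_LIMIT:
        notes.append("fabric exceeds the on-board SM limit; plan an external SM such as UFM")
    return notes


if __name__ == "__main__":
    print(ports_needed(hosts_200g=16, hosts_100g=48))  # 40
    print(plan_notes(hosts_200g=16, hosts_100g=48, fabric_nodes=64))  # []
```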

Related products:

- NVIDIA Quantum-2 QM9700 series (NDR 400G InfiniBand)

- NVIDIA ConnectX-6 VPI adapter cards (100Gb/s dual-port)

- NVIDIA BlueField-3 DPU for infrastructure acceleration

- Starsurge custom rack integration kits and QSFP56 cables (passive/active)

- NVIDIA UFM telemetry platform for large-scale fabric management